Our point estimate (in this case, 2.1) is estimated using our data, and thus, can’t be numerically identical to the true parameter (in this case, 2) that is associated with a population. This is because any data sampled from a population, being only a sample, cannot encapsulate entirely what is going on in the population. But the point estimate for this parameter is about 2.1 (formula in the top left corner of the plot), not exactly 2. See bonus section 1.įor example, if we were to look at the linear model above, the true slope value is 2, because that is how we generated the data through a formula.

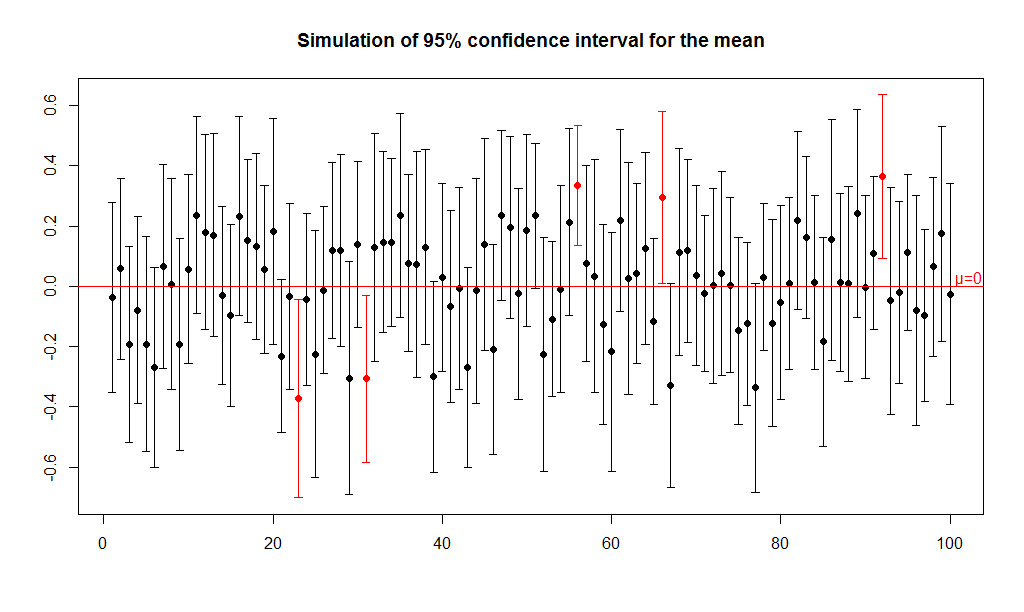

*The word “probability” is to be interpreted using a frequentist approach. We can replace the “95%” with any other percentage that we can think of, though it is rare for us to consider something below 90%. Ggpubr::stat_regline_equation() # `geom_smooth()` using formula 'y ~ x'ĭefinition: if we are asked to construct a 95% CI for a parameter, then the probability* that this CI will contain the true population parameter value is 95%. I also choose to use the option se = FALSE to suppress the visualisation of the (confidence) interval as we will do this manually later. We will also use the geom_smooth(method = "lm") function from the ggplot2 package to add the (simple) linear regression line. This idea of a “true” value is not always possible if we use real data. As much as I would like to use real data to add real-world relevance, generating data with a known value means that we are allowed to discuss how good our estimates are compared to the “true” value. Suppose we have an independent variable ( \(x\)) and a dependent variable ( \(y\)) and we are asked to produce a linear regression.įor simplicity, I will generate some data with \(X \sim N(2,0.2^2)\), \(\epsilon \sim N(0, 0.2^2)\) and \(y = 1 + 2*x + \epsilon\). I will attempt to minimise the need for mathematical derivations and use intuitive language and simulations to illustrate the subtle differences between these two concepts. The statements above are of course extreme simplifications of these statistical concepts. The primary intention is to capture future data points.

PI shows the variability in individual data points.

The primary intention is to understand the variability in the model. In short:ĬI shows the variability in parameter estimates. This blog post explains the main statistical differences between CI and PI in a linear regression model through visualisations. These terms are not always rigorously defined and used, sometimes even in reputable sources (I would also add tolerance intervals here as well, but perhaps for another day). The difference between the interpretation of CI and PI is actually a great example of how very similar mathematical constructions can lead to very different interpretations. Worse still, if we write out the mathematical formulas, they are virtually identical except one term! For a linear regression model and a given value of the independent variable, the CI and PI confusingly share the same point estimate. How can we (statisticians) better explain the differences between a confidence interval (CI) and a prediction interval (PI)? Sure, one could look up the definition on Wikipedia and memorise the definitions, but the real difficulty is how to communicate this clearly to young students/collaborators/clients without using mathematical formalism.
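The setup described in this post can be sketched in base R as follows. This is a minimal illustration under stated assumptions, not the original code: the seed, the sample size `n`, the object names, and the choice of \(x = 2\) as the "given value" are all hypothetical, so the slope estimate it produces will differ from the 2.1 quoted in the text.

```r
# Hypothetical sketch: seed, sample size and object names are assumptions,
# not taken from the original post.
set.seed(123)
n <- 100

# Simulate data as described: x ~ N(2, 0.2^2), eps ~ N(0, 0.2^2), y = 1 + 2x + eps
x   <- rnorm(n, mean = 2, sd = 0.2)
eps <- rnorm(n, mean = 0, sd = 0.2)
y   <- 1 + 2 * x + eps
dat <- data.frame(x = x, y = y)

# Fit the simple linear regression
fit <- lm(y ~ x, data = dat)

# Point estimate and 95% CI for the slope (the true value is 2 by construction)
slope_hat <- coef(fit)[["x"]]
slope_ci  <- confint(fit, parm = "x", level = 0.95)

# CI and PI at one given value of x (x = 2 is an arbitrary choice)
new_x    <- data.frame(x = 2)
conf_int <- predict(fit, newdata = new_x, interval = "confidence", level = 0.95)
pred_int <- predict(fit, newdata = new_x, interval = "prediction", level = 0.95)

print(slope_hat)
print(slope_ci)
print(conf_int)  # columns: fit, lwr, upr
print(pred_int)  # same "fit" value, wider lwr/upr
```

Both calls to `predict()` return the same `fit` value, which is the shared point estimate mentioned earlier. The prediction interval is always the wider of the two, because it also has to account for the noise in a single new observation on top of the uncertainty in the fitted line.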
